MNIST dataset

MNIST stands for the Modified National Institute of Standards and Technology database. It contains 60,000 training samples and 10,000 test samples. Each sample is a 28×28 grayscale image of a handwritten digit, with pixel values ranging from 0 to 255, where 0 represents pure black and 255 represents pure white.
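
To make the pixel range concrete, the sketch below builds a synthetic 28×28 image (not a real MNIST sample) with values from 0 to 255, scales it to [0, 1] by dividing by 255, and flattens it into a 784-element vector. The scaling mirrors what `transforms.ToTensor()` does to 8-bit images. The helpers `make_fake_image`, `normalize`, and `flatten` are hypothetical names introduced only for illustration.

```python
# Illustrative only: a synthetic image stands in for a real MNIST sample.
HEIGHT, WIDTH = 28, 28

def make_fake_image():
    """Return a 28x28 grid of integer grayscale values in [0, 255]."""
    return [[(row * WIDTH + col) % 256 for col in range(WIDTH)]
            for row in range(HEIGHT)]

def normalize(image):
    """Scale 8-bit pixel values to floats in [0.0, 1.0]."""
    return [[pixel / 255.0 for pixel in row] for row in image]

def flatten(image):
    """Concatenate the rows into a single flat list (28*28 = 784 values)."""
    return [pixel for row in image for pixel in row]

image = make_fake_image()
vector = flatten(normalize(image))
print(len(vector))               # number of input features per image
print(min(vector), max(vector))  # smallest and largest scaled value
```

The flattened length of 784 is the same number that appears as the input size of a fully connected layer applied directly to MNIST images.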

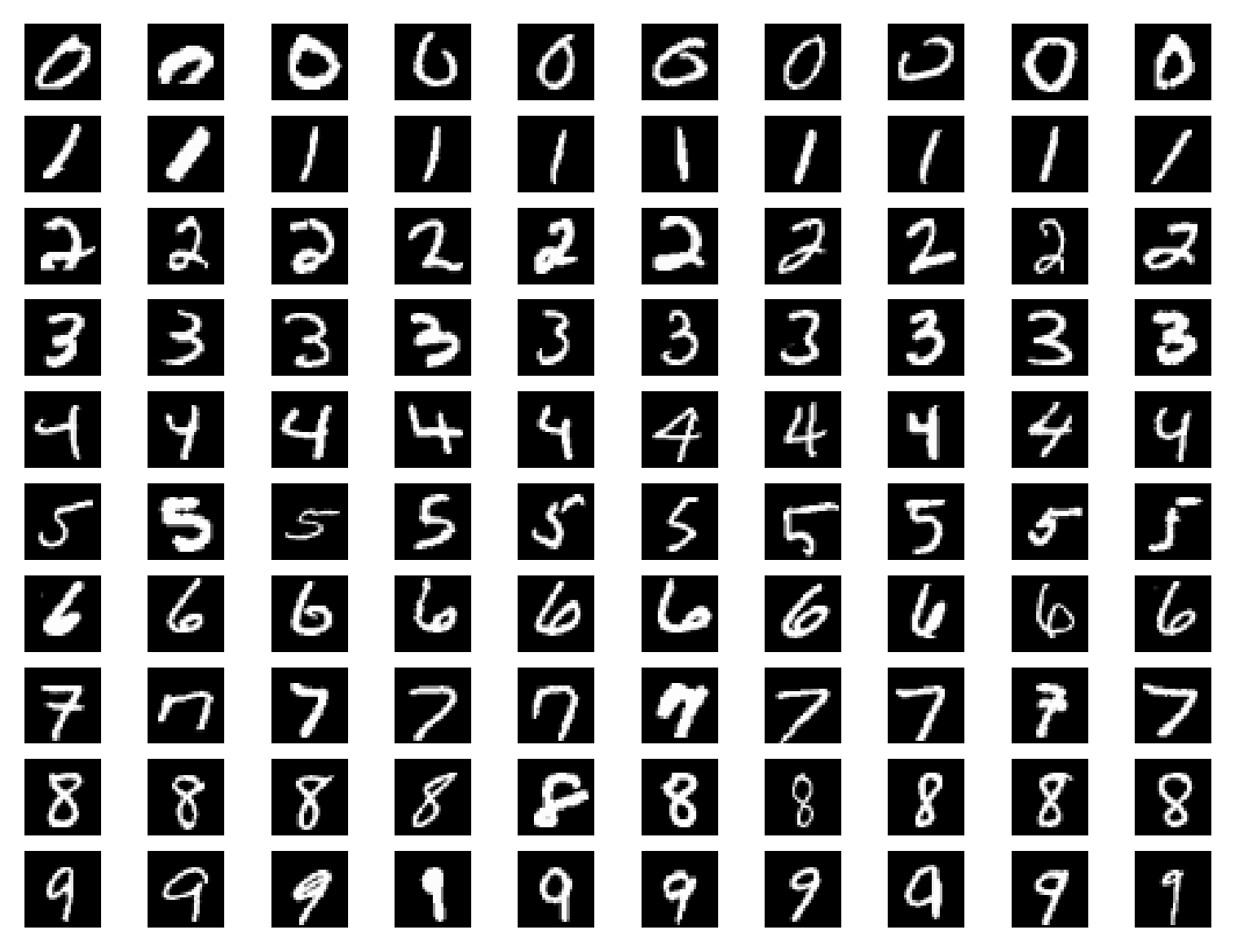

MNIST is already labeled, so you do not need to label it yourself before using it. The picture above shows an example from the MNIST dataset.

Softmax activation function

The softmax function can serve as the activation function of the network's output layer. It normalizes the output values, converting them into probabilities that sum to 1. The formula of the softmax function is:

$$\mathrm{softmax}(x_i) = \frac{e^{x_i}}{\sum_{j=1}^{n} e^{x_j}}$$

where $x_i$ is the $i$-th output value and $n$ is the number of output values.

Here is a specific example.

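Suppose a neural network has 3 output values: [1, 5, 3].

We can calculate

$$e^{1} \approx 2.718,\quad e^{5} \approx 148.413,\quad e^{3} \approx 20.086,\quad e^{1} + e^{5} + e^{3} \approx 171.217$$

$$\frac{2.718}{171.217} \approx 0.016,\quad \frac{148.413}{171.217} \approx 0.867,\quad \frac{20.086}{171.217} \approx 0.117$$

So after the softmax function is applied, the output values become [0.016, 0.867, 0.117]. Each value is the predicted probability of the class at the corresponding array index.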
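
The example can be checked with a few lines of code. The following is a minimal sketch in plain Python rather than PyTorch. The function name `softmax` and the max-subtraction step are choices made here for illustration: subtracting the largest value leaves the result unchanged but keeps the exponentials from overflowing.

```python
import math

def softmax(values):
    """Return the softmax of a list of numbers as a list of probabilities."""
    # Subtracting the maximum does not change the result but avoids overflow.
    shift = max(values)
    exps = [math.exp(v - shift) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([1, 5, 3])
print([round(p, 3) for p in probs])  # [0.016, 0.867, 0.117]
print(sum(probs))                    # the probabilities sum to 1 (up to rounding)
```

The largest input (5, at index 1) receives the largest probability, so taking the index of the maximum output gives the predicted class.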

MNIST program

import torch
from torch import nn, optim
from torch.utils.data import DataLoader
from torchvision import datasets, transforms

train_dataset = datasets.MNIST(root='./data',
                               train=True,
                               download=True,
                               transform=transforms.ToTensor())

test_dataset = datasets.MNIST(root='./data',
                              train=False,
                              download=True,
                              transform=transforms.ToTensor())

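# Batch size (number of samples passed to the model per training step)
batch_size = 64

# Data loader that splits the training set into shuffled batches
train_loader = DataLoader(train_dataset,
                          batch_size=batch_size,
                          shuffle=True)

# Data loader that splits the test set into batches
test_loader = DataLoader(test_dataset,
                         batch_size=batch_size,
                         shuffle=True)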

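# Define the network structure
class Net(nn.Module):
    def __init__(self):
        super().__init__()
        # Single fully connected layer: 784 input pixels -> 10 digit classes
        self.fc1 = nn.Linear(28 * 28, 10)
        # Softmax over the class dimension
        self.softmax = nn.Softmax(dim=1)

    def forward(self, x):
        # Flatten each image: (64, 1, 28, 28) -> (64, 28*28)
        x = x.view(x.size(0), -1)
        x = self.fc1(x)
        x = self.softmax(x)
        return x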

# Define the model
model = Net()

# Define the cost function
mse_loss = nn.MSELoss()

# Define the optimizer (SGD with a learning rate of 0.5)
optimizer = optim.SGD(model.parameters(), lr=0.5)

def train():
    for i, data in enumerate(train_loader):
        # Get one batch of data and labels
        inputs, labels = data
        # Get the model's predictions
        out = model(inputs)
        # Convert the labels to one-hot encoding: (64) -> (64, 1)
        labels = labels.reshape(-1, 1)
        one_hot = torch.zeros(inputs.shape[0], 10).scatter(1, labels, 1)
        # Compute the loss; MSELoss requires both tensors to have the same shape
        loss = mse_loss(out, one_hot)
        # Zero the gradients
        optimizer.zero_grad()
        # Compute the gradients
        loss.backward()
        # Update the weights
        optimizer.step()

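def test():
    correct = 0
    # Gradients are not needed for evaluation
    with torch.no_grad():
        for i, data in enumerate(test_loader):
            inputs, labels = data
            # Get the model's predictions, shape (64, 10)
            out = model(inputs)
            # Get the maximum value and its index (the predicted class)
            _, predicted = torch.max(out, 1)
            # Count the correct predictions in this batch
            correct += (predicted == labels).sum()
    print("Test acc:{0}".format(correct.item() / len(test_dataset)))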

for epoch in range(10):
    print("epoch:", epoch)
    train()
    test()
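
The loss and accuracy steps in `train()` and `test()` can be traced by hand on a tiny batch. The following is a minimal sketch in plain Python rather than PyTorch, assuming a made-up batch of two samples and three classes instead of real MNIST data. The helpers `one_hot`, `mse`, and `argmax` are hypothetical names used only here; `mse` averages over every element, which matches the default `reduction='mean'` of `nn.MSELoss`.

```python
def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]

def mse(outputs, targets):
    """Mean squared error averaged over every element of the batch."""
    squared = [(o - t) ** 2
               for out_row, tgt_row in zip(outputs, targets)
               for o, t in zip(out_row, tgt_row)]
    return sum(squared) / len(squared)

def argmax(row):
    """Index of the largest value, i.e. the predicted class."""
    return max(range(len(row)), key=lambda i: row[i])

# Made-up softmax outputs for a batch of two samples and three classes.
outputs = [[0.1, 0.7, 0.2],
           [0.6, 0.3, 0.1]]
labels = [1, 2]

targets = [one_hot(label, 3) for label in labels]
loss = mse(outputs, targets)
predictions = [argmax(row) for row in outputs]
accuracy = sum(p == y for p, y in zip(predictions, labels)) / len(labels)

print(targets)                # one-hot targets for labels 1 and 2
print(round(loss, 4))         # mean squared error over all six elements
print(predictions, accuracy)  # predicted classes and fraction correct
```

In this batch the first sample is classified correctly and the second is not, so the accuracy is 0.5, and the confident wrong prediction in the second row contributes most of the loss.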